| « Accidental Complexity | C++: Template Meta-Programming 2.0 » |

A continuation of a series of blog entries that documents the design and implementation process of a library. The library is called, Network Alchemy[^]. Alchemy performs low-level data serialization with compile-time reflection. It is written in C++ using template meta-programming.

My second attempt to create a bit-field type was more successful. The size of the container only grew linearly with each sub-field that was added, and the implementation was cleaner. However, I showed an image of what this implementation looked like in the debugger and it was very in convenient. The thing I was concerned with the most was the pitiful performance that was revealed by my benchmark tests.

This entry describes my discoveries and the steps that I took to re-invent the bit-field type in Alchemy for the third time. This is also the current implementation in use by Alchemy, which is about 10% faster than hand-coded collection of packed-bits.

Overview

Let's start with a brief review. The PackedBits type in Alchemy essentially replaces C-style bit-fields. Bit-fields are very common with network protocols, which attempt to make every bit count that is sent across the wire.

C and C++ both have a syntax to access values within a byte at the bit level, the bit_field. Unfortunately, the standard for both languages leave the rules for implementation undefined. Therefore, your code may not be portable to a different compiler for your same architecture.

Alchemy's PackedBits provides an abstraction to portably allow bit-level access for message protocols and storage structures.

The poor performance of PackedBits v2

The implementation for version 2 of PackedBits was a simplification of the original design. The first implementation had a fixed size of 32 entries, which always existed. Therefore they always took up space, and they were initialized and destroyed with each PackedBits type. This made them a very expensive type in space and speed.

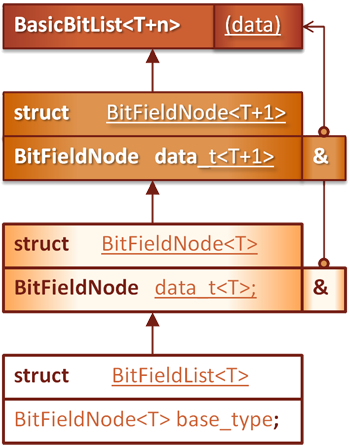

Version 2 solved the hard-coded limit by using recursion and inheritance to handle each BitFieldNode. Unfortunately, there are two aspects of this design that are bad.

Inconvenient Debugging

Since each but field inherits from the next field in the chain, you had to navigated to each sub-class to inspect the value of a particular node. They were not all displayed at once. This is actually a minor inconvenience because some tools like Visual Studio allow you to create custom visualizers for your types.

Design does not scale

There is a challenge in creating a type like this. Abstraction provides an illusion to the user that they are interacting with a single type that provides the expressive syntax of value types in a struct. Yet, these are distinct objects composited that are structured to interact and change specific bits on a single data type.

I achieved this in the original design, by passing a reference to the data field in the PackedBits container, to the BitFieldNodes. The mask and shift operations are then defined by the template instantiation and used on the referenced value.

This created a valid solution. The drawback is that for every new bit-field added to the type, a new reference was added as well. This is a reference that will take up additional space, and will need to be initialized. It turns out, the initialization of these references was killing performance.

Whenever a copy of the type was required, the reference for each node had to be initialized. The worst part is that this data type only manages up to the word-size of the platform you are running on. A 32-bit integer specified to have 32 1-bit flags, would required 32 references and the data value to be initialized for each copy.

|

This implementation had to go!

The Solution

I had know of and considered an alternative solution many times. However, for my original implementation of Alchemy I steered clear of this solution. The reasons were the strict coding guidelines that we followed. My first implementation of what is now called Hg, followed the JSF++ coding guidelines.

A quandary

The quandary was simple. Even though I am now developing this independent open-source library, do I want to use the solution that I had often considered?

The solution was to use the offsetof MACRO defined in the standard library. This MACRO can become tenuous, especially as your object hierarchy becomes more complicated. Luckily I haven't run into any problems yet. Hopefully with the controlled implementation I can keep it that way.

What does offsetof do, and how does it work? This solution must be a macro because it inserts the names of sub-fields from a struct or a class to calculate the offset of that data elements address from the base address of the parent type.

My final decision

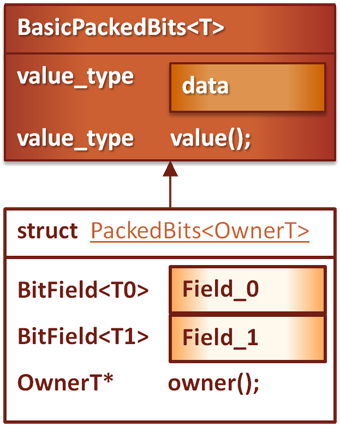

Yes, of course I decided to use offsetof. Basically, I can now group all of the bit-field children at the same level in the PackedBits parent type. I now pass in the parent type to the BitField object as part of its instantiation. This allows the child to compare itself to its parent and find its location relative to the base pointer.

C++

template< class OwnerT, | |

class TagT, | |

size_t OffsetT, | |

size_t CountT, | |

class T = uint8_t | |

> | |

struct BitField | |

{ | |

// Generally the same logic in previous | |

// implementations for shift, mask, read and store. | |

// ... | |

}; |

A member-function of PackedBits is called in order to be able to reference the correct data element. This function is called value(). The trick is, "how to get a pointer to the child types parent object without requiring initialization.

C++

// Continued implementation of BitField: | |

| |

value_type& value() | |

{ | |

return owner()->value(); | |

} | |

| |

// This function returns a pointer to the parent object. | |

OwnerT* owner() | |

{ | |

return reinterpret_cast<OwnerT*>( | |

reinterpret_cast<char*>(this) - TagT::offset()); | |

} |

This adjustment, as well as the change in code-generation performed by the pre-preprocessor form the solution for version 3 of the PackedBits type.

|

A challenge with cross-compiling

When I went to cross-compile and verify the implementation on Linux with gcc, the compiler complained. It believed that it was de-referencing a pointer to 0. My guess is that since it was a template and not an actual value-type, the calculations were performed at the base address of 0, which worked out quite nicely for me.

How did I appease gcc? Look away if you are a purist:

C++

#ifdef __GNUC__ | |

| |

// Yes, we're redefining this MACRO... | |

#ifdef offsetof | |

#undef offsetof | |

#endif | |

| |

// GCC does not approve of the way in which | |

// Alchemy employs this MACRO. | |

// This is a slight alteration: | |

// Performing the calculation on a non-null value, | |

// then re-adjust back to zero after the offset calculation. | |

#define offsetof(type,member) \ | |

(size_t)reinterpret_cast<const char*>((((type*)1)->member)-1); | |

| |

#endif |

Are you ready for my rationalization? It's just like the C++ Standard Library and its treatment of addresses and iterators. It's safe to calculate and store and address one past the end, but it's not safe to dereference that address. We get an offset from this calculation, and we apply it to a known valid base-pointer.

Summary

After an abysmal first showing, I was very pleased when I saw the results from this re-implementation of the PackedBit type. Not only is the design simpler and easier to maintain, but it also performs about 10 to 12% faster than the hand-coded implementation in the benchmark tests that I have created.

I did resort to using the offsetof MACRO, which is prohibited by some coding standards. I do not expect an issues to arise because of the simple context where this calculation is used. There are no virtual functions, or complex class hierarchies to consider. However, I am keeping an eye on its behavior.

Recent Comments